Artificial intelligence is rapidly becoming integral to court operations and legal practice. Yet despite AI’s growing presence in courtrooms across the nation, many legal professionals still have fundamental questions about its responsible implementation, potential risks, and practical applications.

Drawing from our comprehensive research into AI hallucinations and court usage, plus insights from 17 interviews with judges, legal experts, and technology specialists, we’re addressing the most pressing questions facing today’s legal professionals.

What are the real risks of AI hallucinations in legal practice?

AI hallucinations—instances where an AI system produces content that looks authoritative but is factually incorrect—pose serious risks for legal professionals. These errors can take many forms, including citing non‑existent cases, inventing statistics, presenting inconsistent information, or asserting inaccurate legal conclusions.

A major concern is that AI can make mistakes no human would, such as adding a fourth element to a cause of action that has only three. Errors like these can slip past traditional review processes, creating hidden vulnerabilities in legal work.

Real-world cases highlight the stakes. In Mata v. Avianca, Inc., counsel relied on hallucinated case law generated by an AI tool, repeatedly vouched for its accuracy, and even submitted fabricated excerpts that quoted decisions that never existed. In another example, Shahid v. Esaam, a judge incorporated hallucinated citations into an order after trusting an attorney’s AI‑generated content without independent verification. These incidents show how quickly misinformation can enter the record when AI output isn’t carefully checked.

How can courts balance AI adoption with accuracy requirements?

Ensuring accuracy through verification and human oversight

The solution isn’t avoiding AI but implementing robust verification protocols with human oversight at every stage. Professional-grade AI solutions like CoCounsel Legal are specifically designed to address these challenges through:

- Built-in verification features that cross-reference authoritative legal databases

- Citation validation capabilities that ensure accuracy of case law references

- Transparent sourcing that allows users to trace AI-generated content back to original sources

- Professional training on legal reasoning standards rather than generic language patterns

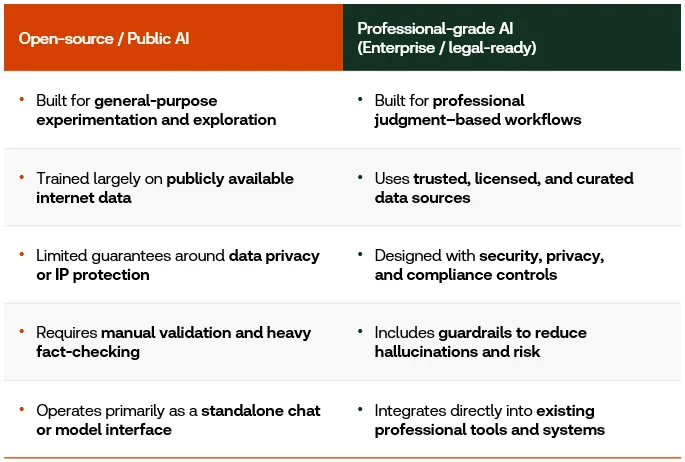

Identifying professional-grade AI versus public AI

Professional-grade AI platforms like CoCounsel Legal incorporate critical safeguards absent from consumer alternatives:

What does integrating AI into legal workflows look like?

AI as an assistant, not an arbiter

AI should enhance, not replace, legal judgment, as Judge Samuel A. Thumma of the Arizona Court of Appeals cautions.

It is most effective when treated as a research assistant. Thomson Reuters Chief Technology Officer Joel Hron describes this role as acting like “a thought partner or like a critic,” helping test assumptions and broaden perspectives.

Verification protocols courts should implement

Courts should establish comprehensive validation processes that include:

Mandatory review procedures

- Independent verification of all AI-generated citations against authoritative databases

- Confirmation that quoted passages are accurate and properly contextualized

- Validation that legal propositions reflect actual holdings of cited authorities
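
For teams that want to see what the first review step could look like in practice, here is a minimal Python sketch. It is an illustration under stated assumptions, not a description of any vendor's tool: `KNOWN_CITATIONS` is a hypothetical stand-in for an authoritative database, the citations in it are invented, and the pattern covers only a few common reporter formats.

```python
import re
from dataclasses import dataclass

# Hypothetical stand-in for an authoritative database lookup. A real
# deployment would query a licensed legal research service instead.
# The entry below is invented for illustration only.
KNOWN_CITATIONS = {
    "123 F.3d 456": "Example v. Sample",
}

# Simplified pattern for a few reporter formats (e.g. "123 F.3d 456").
# It is deliberately incomplete and will miss many valid citation styles.
CITATION_RE = re.compile(
    r"\b\d{1,4}\s+"
    r"(?:U\.S\.|F\.\s?Supp\.\s?[23]d|F\.[234]d|F\.\s?Supp\.|S\.\s?Ct\.)"
    r"\s+\d{1,5}\b"
)


@dataclass
class CitationCheck:
    citation: str
    found: bool  # True only means the citation exists in the lookup


def check_citations(text, known=KNOWN_CITATIONS):
    """Extract citations from text and look each one up."""
    results = []
    for match in CITATION_RE.finditer(text):
        cite = " ".join(match.group(0).split())  # normalize whitespace
        results.append(CitationCheck(cite, cite in known))
    return results


def needs_human_review(text):
    """Return citations that could not be found in the lookup."""
    return [c.citation for c in check_citations(text) if not c.found]


draft = (
    "See Example v. Sample, 123 F.3d 456 (2d Cir. 1999); "
    "Fake v. Case, 999 F.4d 111 (2030)."
)
print(needs_human_review(draft))
```

A check like this covers only the first item in the list. Confirming that quotations are accurate and that a proposition reflects the actual holding still requires a person to read the cited authority.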

Technology-assisted verification

Professional legal research providers now offer tools specifically designed to check briefs, judicial orders, and opinions for accurate case citations and quotations. These automated verification systems can flag potential issues for human review while maintaining workflow efficiency.

Training and education

Judicial bodies should establish comprehensive educational programs ensuring that all individuals who interact with the legal system possess foundational knowledge of AI and its appropriate application in legal contexts. While technical expertise is not required, a baseline understanding of AI fundamentals is essential for responsible integration of these tools into judicial processes.

The AI Policy Consortium for Law & Courts, a joint effort between the Thomson Reuters Institute and the National Center for State Courts, provides a role-based learning toolkit that helps enhance AI literacy in the courts.

How can AI improve access to justice?

With appropriate safeguards, AI‑driven tools can meaningfully expand access to justice. Judge Maritza Braswell has emphasized that avoiding AI entirely only widens the gap for people who must navigate the legal system without counsel.

Professional legal AI platforms can support self‑represented litigants by:

- Translating complex legal concepts into plain language

- Providing procedural guidance

- Assisting with basic document preparation

- Offering educational resources that clarify legal processes

At the same time, greater access does not automatically guarantee fair outcomes. The goal is to ensure that broader access is matched with tools and processes that promote meaningful justice.

What does responsible AI implementation look like?

Responsible AI deployment in legal settings requires three fundamental components:

Human-centric approach. AI must remain under human supervision, never serving as an autonomous decision-maker. Legal professionals retain ultimate responsibility for accuracy, verification, and professional judgment.

Professional-grade tools. Investing in legal-specific AI platforms designed with built-in safeguards is essential. Generic consumer tools lack the verification features, legal training, and professional standards necessary for court use.

Integrated verification workflows. Verification must be embedded throughout the AI lifecycle, not applied as a final check. This includes validation checkpoints when formulating prompts, during initial output review, and before final submission.
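
One way to picture embedded checkpoints is a simple sign-off log that blocks filing until every stage has been reviewed by a person. The Python sketch below is a hypothetical illustration; the stage names and the `VerificationLog` class are assumptions made for this example, not part of any court rule or product.

```python
from dataclasses import dataclass, field

# Stage names mirror the three checkpoints described above; they are
# illustrative labels, not an official standard.
STAGES = ("prompt", "output_review", "pre_submission")


@dataclass
class VerificationLog:
    # Maps each completed stage to the person who signed off on it.
    signoffs: dict = field(default_factory=dict)

    def sign_off(self, stage, reviewer):
        """Record that a human reviewer approved a stage."""
        if stage not in STAGES:
            raise ValueError(f"unknown stage: {stage}")
        self.signoffs[stage] = reviewer

    def missing_stages(self):
        """List stages that still lack a human sign-off, in order."""
        return [s for s in STAGES if s not in self.signoffs]

    def ready_to_file(self):
        """A document is ready only when every stage is signed off."""
        return not self.missing_stages()


log = VerificationLog()
log.sign_off("prompt", "law clerk")
print(log.missing_stages())
print(log.ready_to_file())
```

The design choice worth noting is that readiness is derived from the full set of sign-offs rather than from a single final approval, which reflects the idea that verification is not just a last-step check.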

Moving forward with AI in the courts

The legal profession doesn’t need to start from scratch. Existing ethical frameworks and professional standards around competence, due diligence, and candor to the court provide an excellent foundation for AI integration.

The key is choosing the right technology partners. CoCounsel Legal represents the professional-grade approach courts need. It is AI specifically trained on legal reasoning, built with verification safeguards, and designed to enhance rather than replace human judgment. As Justice Tanya R. Kennedy of the Appellate Division, First Judicial Department of New York, reminds us: “Whether you are a judge [or] an attorney, credibility is everything, particularly when you come before the court.”

Professional-grade AI helps preserve that credibility while unlocking unprecedented efficiency and capability. Discover how CoCounsel Legal’s professional‑grade AI can elevate your practice with verified, reliable outputs tailored for legal work.