Get to know the product specialists using Westlaw editorial expertise to ensure reliable AI research results

Highlights

- Deep Research uses Westlaw's verified content and human experts to ensure AI accuracy.

- Product specialists grade AI outputs against gold standard responses for legal soundness.

- Human verification by attorney-editors prevents fake citations and maintains professional trust.

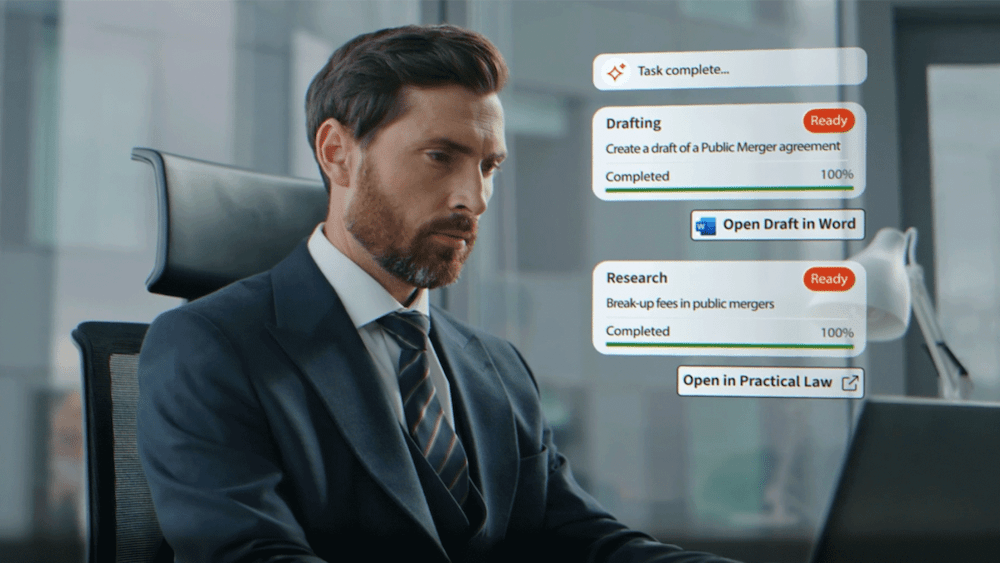

In legal research, the distance between a raw search result and a strategic conclusion can feel immense. Bridging that gap requires more than just processing power; it demands a deep understanding of legal nuance. Deep Research in CoCounsel Legal, grounded in Westlaw, uses advanced agentic AI to automate multi-step research tasks. It functions like an expert legal researcher who sets goals, selects tools, and navigates multiple steps to complete complex research.

But the real engine behind its reliability is a team of dedicated specialists who have spent years refining Westlaw’s most sophisticated tools. Three of these product specialists — Tiffany Lawson, Brian Smith, and Matt Gagnier — use their background in legal editorial work to shape the AI outputs that legal professionals use every day. They ensure that when a lawyer asks a complex question, the AI provides an answer that is not only fast, but legally sound and verified.

The human logic behind the AI

Tiffany Lawson has spent roughly two years grading AI outputs, a role that involves meticulously reviewing machine-generated responses to ensure they meet professional standards. This isn’t just a basic check for typos — it is a rigorous interrogation of the AI’s legal reasoning.

“My main responsibility on a given day is ultimately just grading those AI outputs for accuracy,” Lawson says. “We base these or compare them against these gold standard responses to verify that it correctly identified the key legal issues and didn’t misrepresent any facts or legal reasonings.”

These “gold standard” responses are tied to a set of 150 to 200 standardized questions for which the correct legal answer is already known and vetted. Every time the Deep Research product team makes a change to the model, they run these questions again to see if the AI stays on track.
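The re-run-and-grade loop described above can be pictured with a minimal Python sketch. Everything in it is an illustrative assumption rather than Thomson Reuters' actual tooling: the `GoldItem` schema, the keyword-based `grade_response` function (a crude stand-in for an attorney-editor's judgment), the sample questions, and the `toy_model` stub are all hypothetical.

```python
from dataclasses import dataclass
from typing import Callable, FrozenSet, Iterable, List


@dataclass(frozen=True)
class GoldItem:
    """One benchmark question plus the issues a vetted answer covers (hypothetical schema)."""
    question: str
    key_issues: FrozenSet[str]


@dataclass(frozen=True)
class Grade:
    """Result of grading one model response against its gold item."""
    question: str
    missed_issues: FrozenSet[str]

    @property
    def passed(self) -> bool:
        return not self.missed_issues


def grade_response(item: GoldItem, response: str) -> Grade:
    # Assumption: a naive substring check stands in for human review.
    text = response.lower()
    missed = frozenset(i for i in item.key_issues if i.lower() not in text)
    return Grade(item.question, missed)


def run_regression(gold_set: Iterable[GoldItem],
                   model: Callable[[str], str]) -> List[Grade]:
    # Re-ask every gold question; intended to run after each model change.
    return [grade_response(item, model(item.question)) for item in gold_set]


def pass_rate(grades: List[Grade]) -> float:
    return sum(g.passed for g in grades) / len(grades) if grades else 0.0


# Illustrative gold set (made-up questions, not real benchmark content).
gold_set = [
    GoldItem("Is an oral agreement to sell land enforceable?",
             frozenset({"statute of frauds"})),
    GoldItem("Can the defendant remove the case to federal court?",
             frozenset({"diversity jurisdiction", "amount in controversy"})),
]


def toy_model(question: str) -> str:
    # Hypothetical stub standing in for the AI system under test.
    if "land" in question:
        return "The statute of frauds generally requires a signed writing."
    return "Removal may be available based on diversity jurisdiction."


grades = run_regression(gold_set, toy_model)
print(f"pass rate: {pass_rate(grades):.0%}")  # pass rate: 50%
for g in grades:
    if not g.passed:
        print("needs review:", g.question, sorted(g.missed_issues))
```

In this sketch the second answer is flagged because it never mentions the amount in controversy. In practice, as Lawson describes, the grading is done by people comparing outputs against the gold standard responses, not by string matching.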

Brian Smith, who has been involved since the tool’s early stages, when it was called AI-Assisted Research (AAR) and functioned as a basic chatbot, notes how this process guided the product’s growth. “It’s evolved into these longer responses that we’re getting now in Deep Research,” he explains.

Leveraging the Westlaw editorial legacy

What sets Deep Research apart is its foundation. While many AI models scrape the general internet, which can lead to vague or flatly incorrect answers, Deep Research draws exclusively from Westlaw’s verifiable content. This includes KeyCite, the Key Number System, and professionally maintained annotated statutes.

Matt Gagnier, who served on the Deep Research team before transitioning to a new role, emphasizes the importance of using high-quality data.

“Our AI is being trained not only with legal expertise, but off of our giant legal database. It is not being trained off of every single thing that exists on the internet. It’s being trained off of a very select database that is then also verified by legal experts.”

Matt Gagnier, AI Editorial Senior Program Manager and Product Specialist, Thomson Reuters

This human-led organization of legal content helps the AI handle high-level legal concepts, such as circuit splits or jurisdictional disagreements. When reviewing Deep Research outputs, Lawson looks specifically for situations where the AI has identified conflicts in case law. “Our job is to verify that the AI correctly recognized the conflict and accurately represents each position,” she says.

Why human verification defines trust

The collaboration between technical teams and legal experts ensures that Deep Research reflects how legal professionals think. Stories continue to circulate about lawyers submitting briefs with AI-generated fake citations, resulting in court sanctions and professional fines. But Deep Research users can trust that their research draws from verified legal sources backed by the work of 1,200 full-time attorney-editors.

“What excites me is the knowledge that our work gives that extra layer of quality assurances for the users,” says Lawson.

For Smith, the value of this human-in-the-loop approach is clear.

“I think the most important part is knowing there’s a quality check going in from actual lawyers. Our customers can rest assured that the answers they’re getting are at least being vetted.”

Brian Smith, Sr. Attorney Editor and Product Specialist, Thomson Reuters

Looking ahead

As Deep Research continues to grow, the specialists envision improvements that will make the tool even more valuable for practitioners. Lawson and Gagnier would like to work toward more concise responses that cut unnecessary repetition while still providing thorough analysis.

Smith also sees potential for incorporating more secondary sources like treatises and restatements. This would expand the depth of authority Deep Research can cite while maintaining its high accuracy standards. “I think it would be extra helpful to continue increasing the amount of information we can provide,” he says.

“It is a very exciting time in Deep Research,” Lawson adds. “We’re going to continue to grow our products. We’re going to continue to grow as a team to make sure that we’re giving the users the best products.”

Learn more about CoCounsel Legal and experience AI-powered research built on editorial expertise.