The evolution from experimental curiosity to essential practice tool—and the new standards that make it possible

Highlights

- AI hallucinations require legal professionals to adopt rigorous verification standards before trusting content.

- Existing professional rules already govern AI use, emphasizing human oversight and technological competence.

- Systematic verification workflows position responsible AI adoption as a competitive advantage for legal practices.

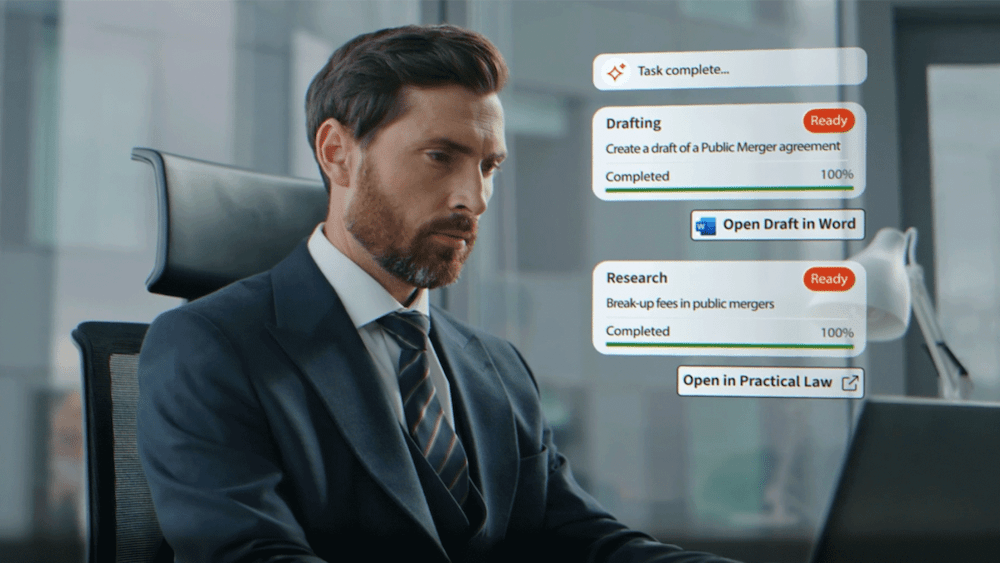

AI has evolved from an experimental curiosity to an essential practice tool, transforming how attorneys conduct research, draft documents, and analyze complex legal issues. Yet this shift brings challenges alongside its significant benefits, forcing the legal community to confront new questions about accuracy, verification, and professional responsibility.

At the heart of this evolution lies a critical shift in how legal professionals approach AI-generated content. The traditional “trust but verify” methodology, long sufficient for conventional research tools, has proven inadequate for the unique challenges posed by AI hallucinations and fabricated content. Legal practitioners are now embracing a more rigorous “do not trust until verified” standard that’s redefining verification workflows and professional obligations in legal AI implementation.

The implications extend far beyond individual cases, reshaping professional standards, verification workflows, and competitive advantages for those who embrace responsible AI adoption while upholding their professional obligations.

When judges became vulnerable

The journey to this shift began with three landmark cases that progressively exposed AI’s risks. In Mata v. Avianca, attorneys faced sanctions for submitting fabricated case law generated by an AI tool, establishing the first clear precedent that unverified AI output cannot substitute for legal research and highlighting the critical need for proper AI due diligence.

Thomas v. Pangburn revealed similar vulnerabilities among self-represented litigants, demonstrating how AI hallucinations could burden court resources while highlighting the delicate balance between compassion and accountability that judges must maintain when addressing pro se AI misuse.

However, the Shahid v. Esaam decision reinforced existing concerns within the legal community about AI accuracy and verification. When a judge incorporated inaccurate AI-generated content into an official court order, it demonstrated that all participants in the legal system need to exercise caution when using AI tools that may present fabricated information convincingly.

This case represents a fundamental shift that legal experts are observing across the profession, moving from ‘trust but verify’ to ‘do not trust until verified.’

This evolution reflects not just changing technology, but a mature understanding that AI’s capacity for confident-sounding fabrication requires fundamentally different verification approaches than traditional legal research methodologies.

Professional standards in the AI era

Contrary to initial concerns about regulatory gaps, existing legal frameworks already provide robust governance for AI use. Federal Rule of Civil Procedure 11 and its state counterparts establish clear certification requirements for court filings, making attorneys personally responsible for verifying all submitted content, regardless of its technological origin. The ABA Model Rules’ competence requirements, adopted by more than 40 states, already encompass technological literacy as a professional obligation under Rule 1.1.

The California State Bar has taken the lead on professional AI responsibilities, emphasizing that AI must serve as an “assistive tool rather than an authoritative source.” Under this framework, all AI-generated content must undergo independent human review and verification against primary legal authorities, a standard that prioritizes verification, human oversight, and responsible use as core risk management principles.

Critically, the choice of AI platform significantly affects both reliability and professional liability. General-purpose consumer tools pose substantially higher hallucination risks compared to professional-grade, legal-specific platforms. Professional legal AI tools incorporate curated legal databases, citation verification features, jurisdiction-specific training, and audit trails that consumer alternatives lack. This distinction isn’t merely technical. It’s fundamental to maintaining professional standards while harnessing AI’s efficiency benefits and meeting your ethical obligations to clients.

Building verification-first workflows

The solution isn’t avoiding AI but implementing systematic verification protocols that position human oversight as the essential safeguard in legal technology deployment.

Effective AI integration requires treating technology as a collaborative partner, not an autonomous decision-maker. AI generates initial drafts and research summaries, while human professionals provide critical analysis, verify accuracy, and make final determinations through rigorous AI due diligence processes.

However, expecting manual verification of every AI-generated assertion is unrealistic at scale. You must be equipped with tools that quickly identify likely errors and explain problematic content, while integrating naturally into existing legal workflows.

This framework ensures legal professionals maintain ultimate responsibility while leveraging AI’s efficiency benefits through these key elements:

- Mandatory review procedures for all AI-generated work products

- Professional skepticism training on AI hallucinations

- Fact-checking protocols for citations and legal precedents

- Expert validation processes for complex arguments

- Specialized legal AI tools with built-in verification features

- Documentation requirements for verification processes

- Clear AI use policies aligned with ethical guidelines

This transforms verification into a core component of AI-assisted legal work, ensuring efficiency never compromises accuracy or professional responsibility.

Competitive advantage through responsible AI adoption

As courts nationwide grapple with AI implementation, the legal community needs more than cautionary tales. You need practical guidance that works. The transformation from experimental curiosity to essential practice tool demands a comprehensive understanding of both opportunities and risks.

Drawing from interviews with federal judges, state bar attorneys, and subject-matter experts, our full report provides the practical insights legal professionals need to navigate AI integration successfully. This isn’t just about avoiding hallucinations. It’s about positioning your practice at the forefront of legal innovation while upholding the highest professional standards through comprehensive AI due diligence.

Download Responsible AI use for courts: Minimizing and managing hallucinations and ensuring veracity to access the complete analysis, expert recommendations, and implementation frameworks that will help you integrate legal AI responsibly starting today.
