Overview addressing the ethics, use, acquisition, and development of AI and key affected practice areas

Reviewed by Jessica Brand, Sr. Specialist Legal Editor, Thomson Reuters | Originally published March 1, 2024

Highlights

- American Bar Association (ABA) guidance on using GenAI emphasizes that lawyers must understand their ethical obligations

- Attorneys should consider including confidentiality provisions in discovery agreements to protect their clients' confidential information

- Procedural and substantive issues span practice areas such as product liability, data protection, intellectual property, bankruptcy, employment law, and antitrust

- US federal agencies like the SEC, FTC, and FCC have issued rules and guidance to regulate specific AI applications

Many legal software tools are incorporating artificial intelligence (AI) to enhance their performance. Generative AI (GenAI) is a type of AI that is trained on existing content to create new images, audio, video, computer code and, most important for lawyers, written texts.

Examples of GenAI for consumer and general use include popular AI tools such as ChatGPT, Copilot, Gemini, Midjourney, and DALL-E.

Large language models (LLMs) are a subset of GenAI that usually focus on text. You may see either or both of these terms in discussions of lawyers’ ethical responsibilities when using AI tools.

Lawyers need to understand the risks involved in using GenAI for legal work. As GenAI reshapes the legal profession, the American Bar Association (ABA), state and local bar associations, state legislatures, and the courts are starting to weigh in and issue rules and guidance on its ethical use.


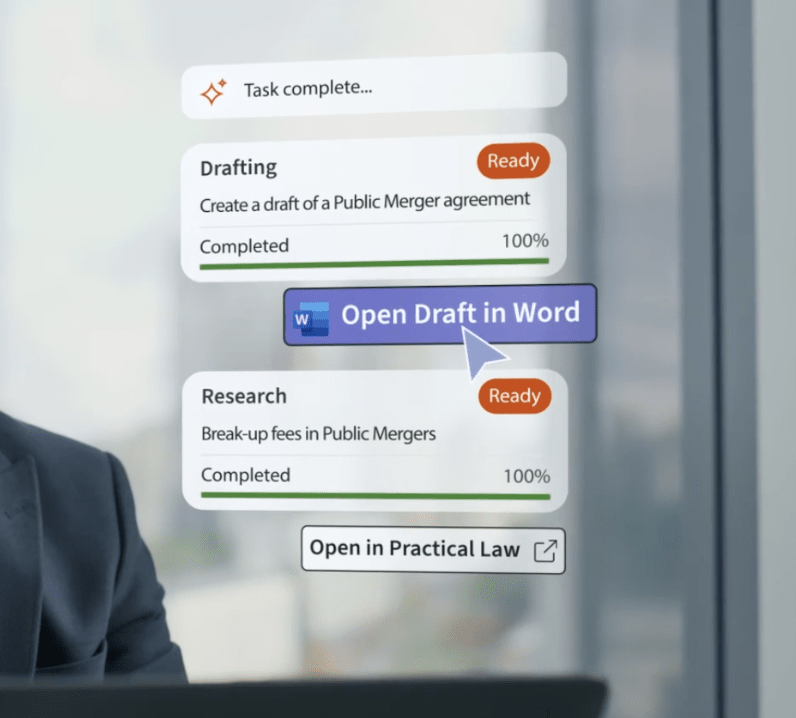

CoCounsel Legal

Trusted content, expert insights, and AI solutions with ISO 42001 certification — the global standard for risk management, data governance, and responsible AI practices

Go professional-grade AI ↗ABA guidance on GenAI legal issues

In July 2024, the ABA issued Formal Opinion 512, its first formal opinion on ethical issues raised by lawyers' use of GenAI. The opinion provides general guidance for what it calls an "emerging landscape" and notes that the ABA and state and local bar associations will likely update their guidance as GenAI tools develop further.

The opinion states that lawyers using AI must “fully consider” their ethical obligations, including:

- Providing competent legal representation

- Protecting client information

- Communicating with clients

- Supervising employees and agents

- Advancing only meritorious claims and contentions

- Ensuring candor toward the tribunal

- Charging reasonable fees

State and local bar association guidance on GenAI legal issues

Some, but not all, state bar associations have issued guidance, which ranges from unofficial to formal. Several state bars that have not yet issued recommendations are exploring AI legal issues and are likely to issue some form of guidance soon.

“Courts may require attorneys seeking admission of GenAI evidence to provide a description of the software or program used and proof that the software or program produced reliable results in the proposed evidence.”

Sr. Specialist Legal Editor, Thomson Reuters

What significant risks does AI present for litigators?

There are two primary categories of risk for AI usage:

- Output risks — Information generated by an AI system may be unreliable to use.

- Input risks — Information may be put at risk by being entered into an AI system.

What are GenAI output risks?

Hallucinations

LLMs can hallucinate, producing incorrect answers with a high degree of confidence. Hallucinations are common with general-use AI tools such as ChatGPT, and they may even be growing more frequent in some of the tools' latest versions.

GenAI tools designed specifically for legal research are built to increase accuracy and limit hallucinations. These legal research tools are designed to retrieve only trusted legal data.

Although these tools are more reliable than general-purpose LLMs, lawyers still have an ethical responsibility to check the output of any GenAI tool they use for accuracy.

In 2023, a judge famously fined two New York lawyers and their law firm for submitting a brief with GenAI-generated fictitious citations (Mata v. Avianca, Inc.). This was the first in a series of cases involving GenAI hallucinations in court documents, including a Texas lawyer sanctioned for similar reasons in 2024.

Bias

Lawyers should be aware of the potential for bias in GenAI results, which can creep in from various sources, such as:

- Biased training data, including historical sources that incorporate the biases of their times

- Incomplete training data

- Algorithmic errors

- The use of information that would not otherwise be allowed into a decision process, such as in hiring

- Data drawn from a limited or different geographical area

What are GenAI input risks?

The greatest input risk is breach of confidentiality. New features are emerging to address privacy issues, such as options that let users turn off chat histories and prevent the information they enter from being used to train the platform, but not all LLMs offer them.

A law firm may want to sign a licensing agreement with the GenAI provider, or with the platform that incorporates the GenAI, containing strict confidentiality provisions that explicitly prevent uploaded information from being retained or accessed by unauthorized persons.

Even with such an agreement, legal professionals should still regard an LLM as being potentially insecure, and they should never put any confidential information into a public model, such as ChatGPT.

What are the primary ethical risks of using GenAI improperly?

Just as litigators can incur penalties for the conduct of non-attorneys they supervise or employ, they can be charged with several ethical violations if they use GenAI to assist them in legal work without proper oversight.

Misunderstanding technology

Attorneys have an ethical obligation to understand a technology before using it, stemming from their duty to provide a client competent representation.

That includes:

- Keeping up with changes in relevant technology

- Reasonably understanding the technology’s benefits and risks

Several jurisdictions have specifically incorporated into their ethical rules a duty of "technological competence," which covers the use of GenAI.

Failure to protect confidentiality

Lawyers using GenAI systems could inadvertently disclose confidential information.

As Thomson Reuters’ Rawia Ashraf points out, “When litigators use generative AI to help answer a specific legal question or draft a document specific to a matter by typing in case-specific facts or information, they may share confidential information with third parties, such as the platform’s developers or other users of the platform, without even knowing it.”

The ABA’s formal opinion notes that it’s difficult to predict the risk of disclosure because of the uncertainty about what happens to information that’s input into an AI tool.

The opinion states that “as a baseline,” lawyers should read and understand the tool’s terms of use, privacy policy, and other relevant contractual terms and policies to learn who can access the information they enter.

Lawyers may also consult a knowledgeable colleague or an external IT or cybersecurity expert who fully understands how the GenAI tool uses the information.

What types of procedural and substantive issues are likely to arise?

The growing use of GenAI is creating new procedural issues. For example, courts will need to develop strategies for authenticating AI-generated evidence.

The use of GenAI could inspire a wave of litigation concerning substantive issues, including:

- Legal malpractice

- Copyright

- Data privacy

- Consumer fraud

- Defamation

Key GenAI legal issues by practice area

GenAI and LLMs have implications for virtually every industry a lawyer may represent and can affect nearly any type of client transaction or business practice.

Commercial transactions involving GenAI

When GenAI becomes a core part of commercial transactions, unique negotiation issues may arise.

For example:

- Representations and warranties — Do a vendor's representations and warranties concerning its GenAI system's performance adequately address the potential business impacts of a system failure?

- Indemnification — If a GenAI system’s decision-making process results in a liability, how do you determine whether the GenAI provider or its user caused the event giving rise to liability?

- Limitations of liability — If a GenAI data analytics system inadvertently discloses user information, will the commercial provider face third-party data breach claims?

GenAI and product liability

Product liability cases involving GenAI technology may rely on traditional product liability principles.

For example, in Cruz v. Raymond Talmadge d/b/a Calvary Coach, a bus struck an overpass. The injured plaintiffs brought claims against the manufacturers of the GPS device that the bus driver had used, based on traditional theories of negligence, breach of warranty, and strict liability.

However, cases where the AI technology is more fully autonomous can raise novel product liability issues. In Nilsson v. Gen. Motors, LLC, No. 18-471 (N.D. Cal. filed Jan. 22, 2018), a motorcyclist injured by a self-driving vehicle sued the manufacturer, alleging that the vehicle itself was driving negligently.

In its answer, the manufacturer admitted that the vehicle was required to use reasonable care. The case settled in its early stages, but the novel issues it raised are likely to recur.

For example, is the GenAI product itself the actor? If so, what standard of care governs it: that of a reasonable human, or a new "reasonable machine" standard?

How should courts treat foreseeability when a GenAI product is intended to act autonomously? And if products themselves can be liable, who compensates the injured party?

Data protection and privacy issues when using AI

Organizations using personal information in AI may struggle to comply with state, federal, and global data protection laws, such as those that restrict cross-border transfers of personal information.

Some countries, particularly those in the EU, have comprehensive data protection laws that restrict AI and automated decision-making involving personal information.

Others, such as the U.S., don't have a single, comprehensive federal law regulating privacy and automated decision-making. Parties must be aware of all relevant federal and state laws, such as the federal Fair Credit Reporting Act (FCRA) and the California Privacy Rights Act of 2020.

“Because opposing counsel may upload discovery documents and information into GenAI for a variety of purposes, including summary and analysis, attorneys should consider including confidentiality provisions in discovery agreements to protect against the inadvertent disclosure or retention of their clients’ confidential information.”

Sr. Specialist Legal Editor, Thomson Reuters

GenAI as intellectual property

Companies creating GenAI and related technology may face unique intellectual property (IP) issues.

Among these are:

- How to protect GenAI IP, such as by applying for patents, registering copyrights, or protecting GenAI technology as a trade secret

- How to determine ownership of GenAI IP

- How to determine whether a company is the victim of GenAI IP infringement

GenAI in bankruptcy

While the Bankruptcy Code doesn’t specifically define AI, treatment of AI in bankruptcy appears to be straightforward when the debtor owns the AI software and does not license it to a third party.

The AI is considered property of the debtor's estate under section 541 of the Bankruptcy Code, so the debtor can generally sell it free and clear of third-party claims.

Complications may arise if the debtor instead licensed the AI software to a third party before the bankruptcy and the license is deemed an executory contract, which the debtor licensor has the right to assume, assign, or reject.

GenAI in the workplace

Modern workplaces are increasingly reliant on GenAI to perform human resources functions. Biases and other issues in AI systems used for employee recruitment and hiring can run afoul of anti-discrimination laws:

- The training data for a GenAI system may contain information about applicants that employers are not legally allowed to ask about on employment applications or during interviews.

- The GenAI could duplicate past discriminatory practices, for example, by using geographical location as a factor in decision-making, which could inadvertently skew results based on race or other protected characteristics.

- GenAI tools could have a discriminatory impact on individuals with disabilities.

AI tools could also increase the chances of disparate impact discrimination, where members of a protected class are adversely affected by a seemingly neutral employment practice.

A recent age-discrimination lawsuit is one such case; it alleges that an AI applicant-screening tool disproportionately screens out applicants based on age, race, and disability.

GenAI used for onboarding employees also poses risks because it could violate employees' privacy rights.

GenAI legal issues regarding health plans, HIPAA compliance, and retirement plans

The use of GenAI to recommend options to plan participants raises compliance and security issues under the Health Insurance Portability and Accountability Act (HIPAA).

Similar issues arise under the Employee Retirement Income Security Act (ERISA) with the use of GenAI in retirement plans.

Concerns include meeting fiduciary duty requirements, monitoring GenAI, and assessing whether sponsor fees and expenses are reasonable in light of their use of GenAI.

Antitrust considerations when using AI

GenAI use carries a number of potential antitrust risks, particularly related to unlawful anticompetitive behavior. GenAI systems could be used to facilitate price-fixing agreements among competitors.

The Department of Justice has already secured guilty pleas from parties using pricing algorithms to fix prices for products sold in e-commerce.

Antitrust risks could also arise from GenAI systems themselves engaging in anticompetitive behavior or otherwise seeking to lessen competition. For example, after assimilating market responses, a GenAI system could learn enough to conclude that colluding with a competing GenAI system is the most efficient way to maximize its company's profits.

Bottom line

GenAI and LLMs promise many benefits for litigators, but they also carry substantial risk.

Legal professionals must be able to identify the key issues concerning AI, understand how those issues are developing, and anticipate how AI may be used in the future.
